Artificial intelligence, or “AI” as it’s popularly called, has arrived and we are seeing new evidence of its impact on our daily lives. Without a way to know what is fake or not, we are in for a rough media sleigh ride in the years ahead.

James Earl Jones, the 91-year-old actor who has been the voice of Darth Vader in Star Wars films for about 50 years, is now allowing AI synthetic speech technology to recreate his younger voice from his previous films for future projects by Lucasfilm.

Lucasfilm is employing Respeecher, a Ukrainian startup that uses AI technology to craft new dialogue from revitalized old voice recordings. The quality is good enough that Jones’ younger voice can go on forever.

Screen actor Bruce Willis has allowed Deepcake, another AI firm, to create his digital twin for an ad campaign. Deepcake’s digital-twin technology allows actors to virtually create their likeness on screen without the need to physically appear in front of the camera. In Willis’ case, the actor was diagnosed with aphasia, which impacted his cognitive abilities, and has retired from acting.

AI has a wide footprint today — from photo and video editing applications to on-screen imagery. Though it is being demonstrated and promoted as a useful tool for image makers, one does not have to use a lot of imagination to see how it can be used for nefarious purposes.

“Historically, people trust what they see,” Wael Abd-Almageed, a professor at the University of Southern California’s school of engineering, told the Washington Post. “Once the line between truth and fake is eroded, everything will become fake. We will not be able to believe anything.”

That is the problem in a nutshell. Think of presidential campaigns. The image of a candidate, who looks very real, can be made to say the most outrageous things. Social media posts can show fake images of well-known people saying or doing just about anything — from reinforcing racial stereotypes to plagiarizing the work of well-known artists. The results, in the wrong hands, could be devastating.

Using AI is easier than one might think. An AI text-to-image generator called DALL-E (named for Salvador Dalí and Pixar’s WALL-E) creates images from simple text prompts. The technology is very good, creating original and deceptively accurate images from any phrase. Demos have startled many, with 1.5 million users now generating two million images a day.

Like most new technology, AI was invented before a way was found to control it. The technology is now on fire. It is growing so fast that no one has yet figured out how to prevent it from being used in evil ways. There is no doubt the first big AI disaster is going to happen. It’s just a matter of when and where.

OpenAI’s systems include Codex, which translates natural language to code, and DALL·E 2, a newer AI technology that can create realistic images and art from a description in natural language.

OpenAI says it has tried to balance its drive to be first with AI against the danger of the technology being used to create disinformation. One way is by prohibiting images of celebrities or politicians. Good luck with that!

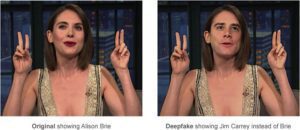

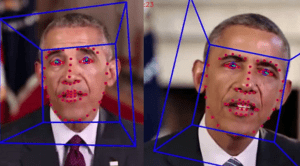

Advances in AI have given rise to deepfakes, a term that covers all AI-synthesized media. These range from doctored videos where a person’s head is placed on another’s body to realistic images of people who don’t exist.

When deepfakes first emerged, some thought they would first be deployed to undermine politics. But that hasn’t happened so far. “The technology has been primarily used to victimize women by creating deepfake pornography without their consent,” said Danielle Citron, a law professor at the University of Virginia and author of the upcoming book, The Fight for Privacy, in an interview with the Washington Post.

Both deepfakes and text-to-image generators are powered by an AI method called deep learning. It relies on artificial neural networks that mimic the neurons of the human brain. The image generators let users create images they describe in plain English or edit images they upload, building on AI’s growing ability to process the way humans speak and communicate.

OpenAI wanted its AI to act as a safeguard against exploitive use by monopolistic corporations or foreign governments. For that reason, it built in filters, blocks and a flagging system. For example, if a celebrity’s or politician’s name is entered, there’s a flag. Also, certain words trigger a warning against images about politics, sex or violence.
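To make the idea concrete, here is a minimal sketch of how a name-and-keyword flagging step might work. It is not OpenAI's actual filter, which has not been published in this form; the function name `check_prompt` and both word lists are hypothetical, invented purely for illustration.

```python
import re

# Hypothetical lists for illustration only; not OpenAI's real filter data.
BLOCKED_NAMES = {"jane celebrity", "senator example"}
WARNING_WORDS = {"election", "nude", "blood"}


def check_prompt(prompt: str) -> list[str]:
    """Return the flags raised by a text prompt (an empty list means none)."""
    text = prompt.lower()
    flags = []

    # Flag any blocked public figure whose name appears in the prompt.
    for name in sorted(BLOCKED_NAMES):
        if name in text:
            flags.append(f"name:{name}")

    # Flag individual words tied to politics, sex or violence.
    words = set(re.findall(r"[a-z']+", text))
    for word in sorted(WARNING_WORDS & words):
        flags.append(f"word:{word}")

    return flags


print(check_prompt("Senator Example riding a horse"))
print(check_prompt("a cat on a sofa"))
```

A simple list like this is easy to evade with misspellings or descriptions that avoid the name, which is one reason to doubt that such filters alone will hold.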

“There’s just a severe lack of legislation that limits the negative or harmful usage of technology,” AI researcher Maarten Sap told the Washington Post. “The United States is really behind on that stuff.”

Laws about deepfakes are still iffy. There is no federal law in the United States preventing distribution of deepfakes. California and Virginia make it illegal to distribute fake material. China has criminalized deepfakes. On social media, it is up to each company to decide how to stop them.

As convenient as AI is to videographers, there is a dark side that cannot be ignored. Not only will it take some un-invented technology to control it, but it will take a new level of media education for the masses not to be fooled by it. A big task ahead!

Visit the DALL·E 2 website: openai.com/dall-e-2/

Beacham has served as a staff reporter and editor for United Press International, the Miami Herald, Gannett Newspapers and Post-Newsweek. His articles have appeared in the Los Angeles Times, Washington Post, the Village Voice and The Oxford American.

Beacham’s books, Whitewash: A Southern Journey through Music, Mayhem & Murder and The Whole World Was Watching: My Life Under the Media Microscope, are currently in publication. Two of his stories are currently being developed for television.

In 1985, Beacham teamed with Orson Welles over a six-month period to develop a one-man television special. Orson Welles Solo was canceled after Mr. Welles died on the day principal photography was to begin.

In 1999, Frank Beacham was executive producer of Tim Robbins’ Touchstone feature film, Cradle Will Rock. His play, Maverick, about video with Orson Welles, was staged off-Broadway in New York City in 2019.
